Four Side Projects with AI, and the Same Wall I Hit Four Times

It was about one in the morning.

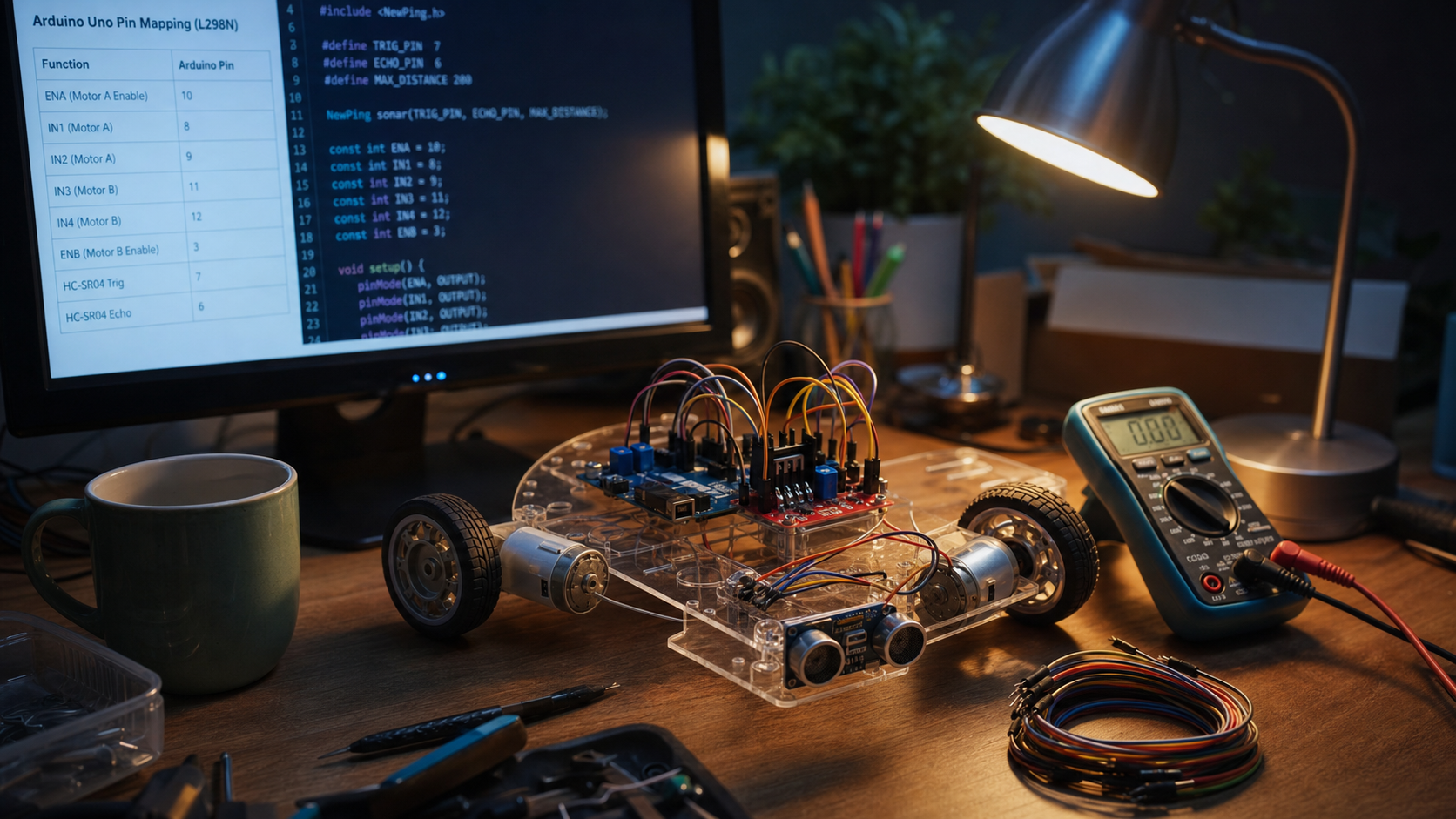

A half-assembled acrylic robot chassis sat on my son's desk. I'd just uploaded an Arduino sketch that Claude Code had written for two DC motors driven through an L298N H-bridge with PWM. One wheel spun in one direction. The other sat still, twitching occasionally. My son leaned over my shoulder asking "what's wrong with it?" while I stared at the serial monitor.

The code was clean. That wasn't in doubt. Standard L298N pattern, library calls in order, PWM values within range. It just didn't work.

I looked at the pin map again. IN1 on pin 8, IN2 on pin 9, ENA on pin 10. IN3 on pin 11, IN4 on pin 12, ENB on pin 13. Exactly what I'd given Claude.

Two hours later, I figured it out. ENA on pin 10 was driving Timer1, and the same sketch was pulling in the Servo library, which also uses Timer1 on the AVR. They were fighting over the same hardware timer. The code Claude wrote was correct in isolation. It just didn't know my project had a servo on it.

The strange thing was, I didn't get angry. My head just got a little colder.

Was this a failure of the AI? Not really. Pin 10 mapping to Timer1 is in the datasheet, and Claude knows it. AFMotor occupying Timer1 and Timer2 is a known footgun, and Claude knows that too. But the code that came out at one in the morning didn't know there was a servo on my board, didn't know I could move ENA elsewhere, didn't know that pin 13 doesn't even support PWM on a Uno. The model could have known all of this. The session didn't.

Three more times after that, I stopped in roughly the same spot. The domains were nothing alike. Korean Four Pillars fortune-telling, tarot, a visual novel, and the robot. With domains that far apart, the walls should look different. They didn't. For a while I filed each one under its own reason: the first was domain complexity, the second was decision fatigue, the third was the inherent difficulty of modeling emotion, the fourth was the embedded-systems mess. It took putting the four next to each other before I noticed they were the same shape.

This is a post about those four walls, and what changed once I realized they were the same one.

Saju was not the easy domain I expected

When I picked saju as my next project, I underestimated it.

Saju is the Korean version of the Four Pillars of Destiny, a system that reads someone's birth date and time into a four-column chart of Heavenly Stems and Earthly Branches. I had just finished a tarot project. Seventy-eight cards, 156 interpretation entries split between upright and reversed, a handful of reading layouts. The whole thing fit comfortably in one session of conversation with an LLM. I assumed saju would scale similarly. Ten Stems, twelve Branches, twenty-two characters. Sounds smaller than 78.

That was the first mistake.

I dropped a one-liner into Claude: "I want to build a saju app. Got any data on it?" In under ten minutes the entire shape of the domain came back. The sixty-cycle stem-branch combinations, the five-element generation and overcoming relationships, the ten-god classification, the hidden stems inside each branch, the twelve-stage life-cycle table, the various combination-clash-punishment-harm interactions between characters. What each piece is, how they connect, how I might represent them in code.

For a minute I was impressed. Saju takes weeks to self-study, and here was a usable map in ten minutes. If side projects could compress that much, almost any domain was within reach.

Then I started writing code, and the picture changed.

Benchmarking existing saju apps, I ran into something absurd: the same birth date and time produced different charts in different apps. The hour pillar was off. Sometimes the month pillar was off. In a couple of cases even the day pillar was off. I thought I was entering wrong values. I tried again. Same result. The eight characters that the entire chart hangs on were not the same eight characters across apps.

In normal programming, the same input always produces the same output. In saju, that assumption broke. There were three reasons.

True solar time. Korea's standard time is anchored at longitude 135° east, but Seoul actually sits near 127° east. That offset comes out to roughly thirty minutes. Saju cuts time into two-hour blocks for the hour pillar, so thirty minutes can shift which block you land in. Apps that apply the correction and apps that don't end up with different hour pillars for the same person.

The midnight question, called yajasi. If you're born between 11 pm and midnight, does your day pillar belong to that day or the next? Different schools answer differently. The day pillar determines the day stem, which anchors the entire ten-god analysis. Move it by one, and the interpretation shifts everywhere.

Daylight saving. Korea ran summer time briefly in the 1950s and 60s. People born in those windows have a birth time that needs to be wound back to real solar time. Some apps do it. Some don't. Some that do it disagree on the exact dates.
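To make the first two reasons concrete, here is a minimal Python sketch of the longitude correction and the two-hour block lookup. It makes several assumptions: it applies a longitude-only correction (a full true-solar-time calculation would also add the equation of time), it rounds Seoul to 127° east, and the function names are hypothetical, not taken from any saju library or from the project's actual code.

```python
BRANCHES = "子丑寅卯辰巳午未申酉戌亥"  # twelve Earthly Branches; 子 covers 23:00-01:00


def longitude_offset_minutes(longitude_deg: float, meridian_deg: float = 135.0) -> float:
    """Minutes to add to clock time: the sun crosses 1 degree of longitude in 4 minutes."""
    return (longitude_deg - meridian_deg) * 4.0


def hour_branch_index(minutes_since_midnight: float) -> int:
    """Index of the two-hour block. Block 0 runs 23:00-01:00, so shift by 60 first."""
    wrapped = minutes_since_midnight % (24 * 60)
    return int((wrapped + 60) // 120) % 12


def day_shift(minutes_since_midnight: float, yajasi_same_day: bool) -> int:
    """0 if the day pillar stays on the calendar day, 1 if it rolls to the next day."""
    born_after_23 = (minutes_since_midnight % (24 * 60)) >= 23 * 60
    return 1 if (born_after_23 and not yajasi_same_day) else 0


clock = 13 * 60 + 20                      # 13:20 on the wall clock
offset = longitude_offset_minutes(127.0)  # Seoul, rounded (assumption)
uncorrected = BRANCHES[hour_branch_index(clock)]
corrected = BRANCHES[hour_branch_index(clock + offset)]
```

Under these assumptions a 13:20 birth lands in the 未 block on clock time and in the 午 block once the correction is applied, which is the kind of disagreement that shows up between apps. The `yajasi_same_day` flag is the school choice: the same 23:30 birth keeps its day pillar with `True` and rolls forward with `False`.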

There was one more thing. The month pillar in saju is not driven by the lunar date. It's driven by the twenty-four solar terms, which are calculated astronomically and only become precise to the minute when you use the official ephemeris. Korea's KASI (Korea Astronomy and Space Science Institute) publishes those values down to the minute. Until I plugged that in, the month pillar for someone born on the day of a solar term boundary kept coming out slightly wrong.
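A rough sketch of why minute precision matters for the month pillar, assuming the month is chosen by counting how many month-opening solar terms have already passed. The boundary instants below are placeholders for illustration only, not real KASI values, and `solar_month_index` is a hypothetical helper name.

```python
from bisect import bisect_right
from datetime import datetime


def solar_month_index(birth: datetime, term_starts: list[datetime]) -> int:
    """Count the month-opening solar terms at or before `birth`.

    `term_starts` must be sorted. A result of 0 means the birth falls
    before the first listed boundary.
    """
    return bisect_right(term_starts, birth)


# Placeholder instants, NOT real ephemeris data. Real values would come
# from an official table such as the one KASI publishes.
boundaries = [
    datetime(2000, 2, 4, 12, 0),
    datetime(2000, 3, 5, 12, 0),
]

just_before = solar_month_index(datetime(2000, 3, 5, 11, 59), boundaries)
just_after = solar_month_index(datetime(2000, 3, 5, 12, 0), boundaries)
```

Two births one minute apart on a boundary day get different month indexes, so a boundary table that is only accurate to the day will misplace some of them.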

None of this was the part that hurt. The part that hurt was that explaining it to Claude once and getting good code back didn't end the problem. The next morning, a new session, and I was typing it all out again: "this project applies true solar time correction, treats yajasi as same-day, uses KASI minute-precision data for solar terms, and only back-corrects daylight saving for the 1950s and 60s window." First time, fine. Fifth time, exhausting.

The fix ended up being documents. A docs/ folder with four files: architecture, domain model, calculation engine, AI interpretation. The domain model alone grew to thirty-five pages. For a side project that's a lot of paper. But that's what let me start a new session with @docs/ and have the model arrive already understanding the whole map.

Without the docs, context setup at the start of each session ran about fifteen minutes. With them, it took one or two. Saju went through dozens of sessions. The bigger win wasn't the time saved, it was the consistency. When I explained things verbally, I always left something out. When I loaded the documents, nothing was missing.

The documents also changed the shape of the code. When I asked the model to write the ten-god analysis function later, it already knew the return type of the day-stem calculation and the five-element mapping table. So the interfaces lined up by default. Without that shared context, the same concept tended to show up under two different names across functions: dayStem in one place, mainStem in another. Once the canonical naming sat in the documents, that kind of drift stopped happening.

So this was the first wall. Strictly speaking it wasn't a wall, it was the slow accumulation of repeating the same explanation until the cost stopped being bearable. And the reason it accumulated wasn't that the AI was weak. It was that my project's decisions lived outside of it.

Tarot wasn't 78 cards, it was 156 interpretations

Tarot came before saju.

It started from a single line: "I want to build a web app for reading tarot." No PRD, no spec doc. Just a vague interest and a sense that it would be fun on the web.

The spec settled itself in about ten minutes. Three questions came back from Claude, I answered them, and that was the project. Reading modes: one-card, three-card, and the Celtic Cross. I cut the free-choice mode where the user picks how many cards. For someone new to tarot, "how many cards do you want?" is not friendly, it's a tax. Interpretation: prewritten static text instead of calling an LLM API on every reading. Stack: React + Vite + TypeScript. The name "Tarot Master" took about five seconds to land on.

This part felt the same as saju. A small domain, AI sketches the shape fast, lays the decision axes out where I can see them, and my priorities sharpen. Smooth so far.

Then two things caught my foot.

The first was the actual workload. I started with "tarot has 78 cards" in my head, and learned mid-project that each card has separate upright and reversed meanings. Not a simple good-versus-bad split either. Upright is the card's energy expressed naturally; reversed is that same energy blocked, overdone, or twisted. The Magician reversed isn't "powerlessness," it's "deception, wasted talent, manipulation" — ability used in the wrong direction. So instead of writing 78 entries I was writing 156, and each pair had to be coherent.

The second was copyright. The most familiar deck is Rider-Waite-Smith. The 1909 original is public domain. The 1971 recoloring by U.S. Games Systems is not: it's a derivative work with its own copyright, and most of the tarot imagery floating around the web is the 1971 version or descendants of it. The color tones differ subtly and a few details have been touched up. Unless you know what to look for, you can't tell. Grabbing one of those by accident is a copyright problem waiting to happen.

Both of these came up during the same conversation thread. Card count led to image sourcing led to copyright led to original-versus-recolor. Reaching the same depth through search would have meant opening ten tabs and stitching the pieces together myself.

The wall came somewhere else.

Who writes the 156 interpretations? If I write them by hand it takes forever. If I have an LLM generate them on demand at runtime, the API bill scales with traffic and the side project starts costing money before it earns any. There's also a UX problem: flipping a card and waiting two or three seconds for the result breaks the moment. The instant feedback of turning a card is part of why tarot works at all. A loading spinner pops the spell.

So I went with static prewritten text. Generate it once with the model, edit it by hand, ship it as data. The decision was right. What I want to point at is how the decision got made.
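As a minimal sketch of what "ship it as data" can look like, assuming one record per card with two prewritten texts. The real app is TypeScript; this is Python for brevity, the names `CardText`, `read_card`, and `draw` are hypothetical, and the upright wording is illustrative rather than taken from the project.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class CardText:
    upright_text: str
    reversed_text: str


# One record per card. A full deck would hold 78 records, i.e. 156 texts.
INTERPRETATIONS = {
    "The Magician": CardText(
        upright_text="skill, focus, willpower",  # illustrative wording (assumption)
        reversed_text="deception, wasted talent, manipulation",
    ),
}


def read_card(name: str, is_reversed: bool) -> str:
    """Look up the prewritten text. No network call, so the flip is instant."""
    text = INTERPRETATIONS[name]
    return text.reversed_text if is_reversed else text.upright_text


def draw(rng: random.Random) -> tuple[str, bool]:
    """Pick a card and an orientation from the shipped data."""
    name = rng.choice(sorted(INTERPRETATIONS))
    return name, rng.random() < 0.5
```

Because the lookup is a dictionary access, there is no per-reading API cost and no spinner between the flip and the text.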

If I had asked the model "what should I do here?" the answer would have been "both are viable, here are the tradeoffs." That's not wrong, but it's not useful either. The decision only crystallized once I handed over my project's constraints: API cost, response latency, the importance of the flip moment. The model lays out options well. It can't pick between them until your priorities live inside the prompt.

This was the second wall. The domain was small enough that context collapse didn't bite. A different shape of friction showed up instead. The choices were on the table, but the model didn't tell me which one was mine until I named the constraints out loud.

On the surface this looked different from saju. Saju was "repeating the same explanation costs me time." Tarot was "my priorities aren't in the room." But step back and they're the same. Outside the boundaries of my project, the model can't give me the right answer. Two faces of the same spot.

The visual novel: thirty minutes of code, and I still don't know about the feelings

The third project surprised me.

I asked my son if he wanted to build something together. His eyes got wide: "I can do that?" I asked what genre he liked. I was bracing for shooters, puzzle games, maybe Minecraft.

He thought for a second and said: "Dating sim."

I was caught off guard. I have no idea where he picked that up (friends, the internet, somewhere), but he was serious about it. The answer stuck in my head longer than I expected. Some of that was just surprise at a twelve-year-old's taste, but more of it was the developer in me reacting to the shape of the problem.

I dropped the usual opening line into Claude: "I want to make a visual novel. Where do I start?" The reply mentioned Ren'Py, which I'd never used. A Python-based visual novel engine — more of a VN-authoring toolkit than a game engine, really. Dialogue, choice menus, branching, and UI are all there out of the box. You edit one script.rpy file and the game runs.

The minimum example was this:

    define e = Character("Eileen")

    label start:
        scene black
        e "Hello."
        e "First visual novel test."

        menu:
            "Option A":
                jump route_a
            "Option B":
                jump route_b

    label route_a:
        e "You picked A."
        return

    label route_b:
        e "You picked B."
        return

That's the whole thing. label for scenes, menu for choices, jump for branching. Sixteen lines and you have a working VN. Thirty minutes to understand the engine.

The speed was almost suspicious. As a side project this was an ideal starting point. The code is barely there, so the work goes into the story. And story-and-scenario design is exactly the kind of thing an LLM ought to be useful for.

That was the assumption. It didn't survive.

I asked Claude how to build the affection system. I was expecting the obvious: a number that goes up and down based on choices. What came back was different. The model suggested layering the emotion model. Separate disposition, in-the-moment feelings, and relationship state. Don't treat "love" as one variable. Treat it as a composite of trust, intimacy, and fear of loss.

That caught me. I knew the model could surface domain structure, but watching it cleave the emotion model along these specific seams was unexpected. Left to myself I would have started with a single affection score, 0 to 100, plus or minus five per choice, and called it done. Splitting trust and intimacy and fear-of-loss into three variables made it possible for the same "love" to play out as different relationships depending on which axis was high. Trust high but intimacy low feels distant. Intimacy high but fear-of-loss high feels clingy. Same word, different ending.
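A minimal sketch of the three-axis idea, assuming each axis is an integer from 0 to 100. The thresholds, the `Relationship` class, and the `tone` labels are all hypothetical choices for illustration. Since Ren'Py is Python-based, something like this could presumably sit in an `init python:` block, though that placement is an assumption rather than how the project does it.

```python
from dataclasses import dataclass


def _clamp(value: int) -> int:
    """Keep an axis inside the 0-100 range."""
    return max(0, min(100, value))


@dataclass
class Relationship:
    trust: int = 0
    intimacy: int = 0
    fear_of_loss: int = 0

    def apply(self, trust: int = 0, intimacy: int = 0, fear_of_loss: int = 0) -> None:
        """Apply one choice's effect to each axis independently."""
        self.trust = _clamp(self.trust + trust)
        self.intimacy = _clamp(self.intimacy + intimacy)
        self.fear_of_loss = _clamp(self.fear_of_loss + fear_of_loss)

    def tone(self, high: int = 60, low: int = 40) -> str:
        """Name the relationship by which axes dominate (thresholds are assumptions)."""
        if self.intimacy >= high and self.fear_of_loss >= high:
            return "clingy"
        if self.trust >= high and self.intimacy <= low:
            return "distant"
        return "neutral"
```

A single 0-to-100 affection score cannot tell `Relationship(trust=70, intimacy=20)` apart from `Relationship(intimacy=80, fear_of_loss=75)`. With three axes the first reads as distant and the second as clingy.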

So I pushed further. A few days later I told my son: "Let's make a visual novel. The story changes depending on what you pick."

"I get to pick?"

"Yeah. Different choices, different story."

"What if I pick a bad one?"

"You might end up sad."

"Why?"

I didn't have an answer ready.

Why would you feel sad? It's a made-up character, a made-up story. Why do real feelings show up there? The question he tossed off was, in fact, the central question of the whole project.

So I asked Claude the same thing. "Why do people feel real emotions in fictional relationships?" The reply landed somewhere around "because emotional immersion is possible" and "because choices make things meaningful." Not wrong. Not the answer either.

That's where I stopped. The code wasn't blocking me — Ren'Py handled that. The domain surface wasn't blocking me — the model had laid out the emotion architecture cleanly. The wall showed up one level below the surface. The moment I asked "but why does this work on a person," the answers got flat.

At first I read that as a tool limitation. Better models, problem solved. After a while I started to suspect it was something different. The question was a different kind of question. Some questions answer well from information. Others only answer well from memory and lived experience. Pushing both through the same tool was always going to leave one side hollow.

With the robot, the same foot fell into the same hole

The fourth project was the robot.

This spring I asked my son: "Want to build a robot with me? Camera, sensors, an AI that decides what to do with what it sees." He thought for two seconds and said "let's go." That evening he was on YouTube finding robot videos on his own. The next day he announced he was taking the body.

Roles set themselves. He was hardware. Assembly, wiring, sensor mounts, the chassis. I was software. The local LLM server, the coding agent, the comms layer. Together we'd drive the agent in natural language. It turned into a four-month project.

The LLM ran locally on Macs at home, not on a cloud API. Anything that needs realtime decisions on a robot can't afford a round trip to the internet. The same LLM running on the home LAN cuts that latency to almost nothing. Three Macs in rotation: a Mac mini M1 with 16 GB, a Mac mini M4 with 24 GB, and a MacBook Pro M4 Pro with 24 GB. Apple Silicon's unified memory fits local LLM inference better than I'd expected.

That part went smoothly. Then came the one-in-the-morning incident I opened the post with.

ENA on pin 10, Timer1 conflict, two-hour debugging session, one wheel running, one wheel not.

Worth pausing on a second time. As the two hours ended, the thing that crystallized for me was this: the AI didn't fail. The AI didn't lack the knowledge either. All the information was available to it. The session just didn't have the information yet.

So I started carrying the information around with me, in a file called CLAUDE.md. Ten lines to start with. Just the pin map. After the Timer1 conflict I added a "library choices, with reasons" section. We don't use AFMotor; it takes Timer1 and Timer2. We do use NewPing; it does async pings so the main loop doesn't stall. I added the reasons, not just the choices. "Don't use AFMotor" by itself doesn't stick — the next session could re-introduce it. "Don't use AFMotor because of the timer conflict" sticks.

I moved ENA from pin 10 (Timer1) to pin 5 (Timer0). I moved ENB from pin 13 to pin 6 (Timer0). Pin 13 doesn't even do PWM on the Uno, which is something I should have caught on day one. After those two-line changes the motors ran cleanly.
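The check that would have saved those two hours can be sketched as a small lookup. This is Python rather than Arduino C++, and it is a rough sketch under stated assumptions: the pin-to-timer table is for an ATmega328P-based Uno, the library table only carries the Servo entry, and `check_pwm_pins` is a hypothetical helper, not part of any Arduino tooling.

```python
# Hardware-PWM pins on an ATmega328P-based Uno and the timer behind each one.
UNO_PWM_TIMER = {
    5: "Timer0", 6: "Timer0",
    9: "Timer1", 10: "Timer1",
    3: "Timer2", 11: "Timer2",
}

# Timers claimed by libraries in the sketch. Only Servo is filled in here;
# extend the table from each library's own documentation.
LIBRARY_TIMERS = {"Servo": {"Timer1"}}


def check_pwm_pins(pwm_pins: dict[str, int], libraries: list[str]) -> list[str]:
    """Return readable problems for signals that need hardware PWM."""
    problems = []
    for signal, pin in pwm_pins.items():
        timer = UNO_PWM_TIMER.get(pin)
        if timer is None:
            problems.append(f"{signal}: pin {pin} has no hardware PWM")
            continue
        for lib in libraries:
            if timer in LIBRARY_TIMERS.get(lib, set()):
                problems.append(f"{signal}: pin {pin} uses {timer}, also claimed by {lib}")
    return problems


before = check_pwm_pins({"ENA": 10, "ENB": 13}, ["Servo"])
after = check_pwm_pins({"ENA": 5, "ENB": 6}, ["Servo"])
```

With the original pin map, `before` reports both the Timer1 clash on ENA and the missing PWM on pin 13. With the moved pins, `after` is empty.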

The CLAUDE.md kept growing. ROS2 topic naming got a section the first time I asked for a node. Safety constraints went in — ignore forward commands when the HC-SR04 reads under 20 cm, full stop and reverse under 15 cm. Coding rules went in — no Timer1, no delay() in loop(), all serial debug at 9600 baud. One new line per painful lesson. It's around 120 lines now.
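The two distance rules can be written as a small filter that every drive command passes through. This is a minimal sketch in Python rather than the Arduino sketch itself. The command strings and the `filter_command` name are assumptions, and the distance is taken to be the HC-SR04 reading already converted to centimetres.

```python
def filter_command(command: str, distance_cm: float) -> str:
    """Apply the distance rules before a drive command reaches the motors."""
    if distance_cm < 15:
        # Closest zone: override whatever was asked.
        return "stop_and_reverse"
    if distance_cm < 20 and command == "forward":
        # Caution zone: forward is dropped, other commands pass through.
        return "ignore"
    return command
```

Checking the tighter 15 cm rule first matters: if the 20 cm rule came first, a forward command at 10 cm would only be ignored and never trigger the reverse.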

What I find interesting is that some of those lines were not written by me. When I first asked Claude to set up the harness, I pointed it at the NewPing and Adafruit Motor Shield GitHub repos. It read both, decided on its own that NewPing's async ping pattern was worth noting (avoid blocking delays), and added "watch for AFMotor timer conflicts" as a constraint after reading the Adafruit code's timer usage. I didn't comb the datasheets and write that up. The model read the source and made the call.

The payoff came a few days later. My son's first line typed straight into Claude Code was:

"Make it stop when the ultrasonic sensor reads under 20 cm."

Grammar didn't matter, completeness didn't matter, his own words were the rule. One sentence in, Claude Code generated the sketch. Because the HC-SR04 pin map lived in CLAUDE.md, the Trig and Echo pins came out right. Because the 20 cm threshold lived in the safety constraints, the logic followed it.

He saved the file. I helped with the Arduino IDE upload. We put a hand 20 cm in front of the robot. It stopped, exactly there.

"It works!"

A twelve-year-old just stopped a robot with one natural-language line. He didn't need to memorize the pin map; it was in the harness. He didn't need to know why we chose one library over another; the context was in the harness. He only had to describe the behavior he wanted, in his own words.

That was the fourth wall. In saju, the wall was repeating the same explanation until it broke me. In tarot, the wall was needing to name my priorities before any choice could be made. In the visual novel, the wall was the floor dropping out one step under the surface. In the robot, the wall was the model writing confident code without knowing a constraint I assumed it knew.

The shapes were not identical. The spot the foot fell into was.

The same spot, four times

I had four projects, all very different, all walking me into roughly the same hole. Once that pattern was visible, three things became hard to unsee.

What I noticed first was that every single project needed the same domain knowledge re-explained at the start of every new session. True solar time, yajasi, and daylight saving for saju. "Only use the 1909 plates" for tarot. The layered emotion model for the visual novel. The pin map and library reasoning for the robot. The first time, Claude helped me arrive at each of these. After that, if the decision wasn't written down somewhere persistent, the next session started from zero.

The fix was the same shape each time: externalize. The 35-page domain model document for saju. The 120-line CLAUDE.md for the robot. The static interpretation data for tarot. All pointed in the same direction. Don't make the model remember; write it down outside, and load it on the way in.

The other thing that kept showing up: in every project, the model produced confident code without knowing a constraint I'd assumed was visible. The Timer1 conflict is the cleanest example. The code was fine in isolation; it just didn't know there was a servo on the board. The first calendar-conversion code I got for saju was a standard lunar-solar conversion; it just didn't know which school's yajasi rule I had picked. The model wasn't wrong, it was uninformed. The hard part is that it doesn't tell you it's uninformed. Code written without that information looks the same as code written with it. AI-written code usually looks cleaner than mine, and cleanliness suppresses suspicion. Clean-but-wrong is exactly the kind of thing you only notice at one in the morning when one wheel won't spin.

And the part I hadn't expected: the domain surface gets captured fast, but one level deeper the answers flatten. Saju's whole shape arrived in ten minutes, but "given this school, how does this constraint change?" was still something I had to work out in my own head. Tarot's structure landed in one reply, but "given my cost and latency, which option is mine?" needed me to state the priorities first. The emotion model went two layers deep, but "why does this work on a person at all?" came back as platitudes. The robot's code patterns were standard, but "how does this interact with the other library on my board?" needed me to surface the conflict.

That spot, one level below the surface. Four times, the foot stopped there.

Sitting with this for a while, I came to a different reading. The wall isn't a flaw in the tool. The wall is built into where the tool ends and the project begins. The tool carries the general case. I carry the specifics of my project. If those two don't meet in the same place, the foot always falls into the same hole.

I don't think better models close this gap. They'll get the surface faster. They'll write more precise code. But which other libraries live on my board, which school of saju I committed to, how much my user cares about the immediacy of flipping a tarot card — these aren't things a model can know unless I tell it, and I can't tell it consistently unless I've written it down somewhere I can re-load.

The place where the foot stopped wasn't a wall. It was the meeting point. The tool ends, my project begins.

What changed for me wasn't the meeting point. The meeting point is the same. What changed was that I could see it before I walked into it. Before, a two-hour debug at one in the morning made me angry. After, the same two hours felt different: a diagnosis arrived first ("ah, this context never made it in"), and the work became context-engineering rather than debugging. Same hours, different weight.

So what do you do about it?

After the fourth time, I stopped starting from scratch.

The 35-page domain model for saju. The static data layer for tarot. The 120-line CLAUDE.md for the robot. The emotion-model document I'm currently extracting from the visual novel project. Four different shapes. Same job: write the project's decisions down somewhere outside the model, and reload them on every session.

Once you've done it once, the next time your foot approaches a similar spot it lands differently. You don't stop there; you notice "ah, this part hasn't been externalized yet" and move on. The wall, once visible, stops being a wall and becomes the next item on the list.

I'd been hand-rolling the same scaffolding every project. Pin map table, library-choice rationale, behavior constraints, coding rules. After a while I started seeing the same shapes repeat. So I'm pulling that out into a reusable pattern, so the next project doesn't have to build it from zero.

One more thing. The wall I hit in the visual novel doesn't yield to externalization. "Why do people feel real emotions in fictional relationships?" isn't an answer I can write down because I don't have it. That one has to be worked out somewhere else — by replaying games I used to love, or sitting with my son's "why?" until something shifts. Recognizing that some walls are not the same wall is part of the work too.

This post isn't going to make your one-in-the-morning debugging session any shorter. What I'm curious about is this: when you work with AI, where do you keep stopping? Does it look like a different spot every time, or like the same spot wearing different clothes? And once you started seeing the spot, where did the next step go?

The next post is going to dig into one of these specifically: the way context disappears between sessions, and what to do about it.
